Ongoing Projects

Exceptions handling in Artificial Intelligence Applications

Validation of AI algorithms for Cancer Detection

Deep Learning for Differential Diagnosis of Lung Cancer

Evaluating Cardiac Risk in Breast Cancer Patients using Artificial Intelligence

Development and Validation of Image-based Breast Cancer Risk Prediction Model

Investigation into Federated Approaches to Machine Learning

Siamese Neural Network for Early Detection of Breast Cancer Using Longitudinal Screening Mammograms

Exceptions handling in Artificial Intelligence Applications

The objective of this project is to develop an exception handling algorithm that enables artificial intelligence (AI) algorithms to be applied to screening mammography studies that consist of more than four mammography images.

A screening mammography study consists of four views: Left Craniocaudal (L CC), Left Mediolateral-Oblique (L MLO), Right Craniocaudal (R CC), and Right Mediolateral-Oblique (R MLO). Artificial intelligence (AI) algorithms have been developed to use all four views to detect mammographic abnormalities that may indicate the presence of breast cancer. However, over 5% of the screening mammography studies carried out at our institution consist of more than four images for the four views. Some of these occur due to repeat imaging of the same mammographic view. An image is repeated when the first image is not clinically acceptable because of motion artifacts, inadequate tissue inclusion, or other artifacts. Furthermore, some women have larger breasts that cannot be imaged in a single view because of the physical size limitation of the digital detector, so the view is acquired as several tiled images.

In this study, we propose to quantify these exception studies that contain more than four mammography images. Once they are quantified, we will develop a methodology to assign the rationale for the exception (repeat image or tiled image). In the case of tiled images, we will develop an algorithm that combines the tiled images into a single image of the same image size. In the case of repeated images, we will develop an algorithm that identifies the rationale for the repeat by comparing the two images and then selects the correct image, which in most cases is the second image.
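The first step described above, finding studies with more than four images and the views that hold the extra images, can be sketched as follows. This is a minimal illustration and not the project's algorithm: the dictionary keys `laterality`, `view` and `acquired` are hypothetical, and the sketch does not attempt to decide whether an extra image is a repeat or a tile.

```python
from collections import defaultdict

# The four standard screening views named in the text.
STANDARD_VIEWS = [("L", "CC"), ("L", "MLO"), ("R", "CC"), ("R", "MLO")]


def is_exception_study(images):
    """A study is an exception if it holds more than four images."""
    return len(images) > len(STANDARD_VIEWS)


def find_exception_views(images):
    """Group images by (laterality, view); return views with extra images.

    `images` is a list of dicts with hypothetical keys "laterality",
    "view" and "acquired" (any sortable acquisition-order value).
    Each returned group is sorted by acquisition order.
    """
    by_view = defaultdict(list)
    for image in images:
        by_view[(image["laterality"], image["view"])].append(image)
    return {
        view: sorted(group, key=lambda im: im["acquired"])
        for view, group in by_view.items()
        if len(group) > 1
    }
```

Sorting each group by acquisition order keeps the later image easy to pick out, which matters because the text expects the second image to be the correct one in most repeat cases.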

Development and testing of this type of algorithm is highly valuable for future application of AI systems to improve breast cancer detection in the breast screening program.

Validation of AI algorithms for Cancer Detection

The primary objective of this study is to evaluate the performance of AI systems for breast cancer detection using the digital mammograms from the breast screening program.

Recent studies have found that double reading of mammograms can improve cancer detection compared to traditional single-reader assessment. Consensus discussion or arbitration of discordant readings between readers can also reduce the recall rate for further assessment while maintaining high accuracy in cancer detection. However, independent double reading and consensus meetings require greater upfront cost for the healthcare system and greater radiologist availability. Artificial Intelligence (AI) may be uniquely poised to help with these challenges. Studies have demonstrated the ability of AI to meet or exceed the performance of human experts on several different medical image analysis tasks.

AI algorithms used in this way could help predict the likelihood of breast cancer. Using these algorithms as independent peer reviewers has the potential to outperform radiologists alone while reducing radiologist workload. Another method of reducing workload using AI involves triaging screening mammograms based on risk. One recent study using a commercial AI algorithm triaged higher-risk screening examinations for enhanced assessment and sent low-risk examinations (the 60% of mammograms with the lowest risk scores, in this context) to a stream with no radiologist review. This approach reduced radiologist workload by more than half while detecting cancers that would otherwise have been diagnosed at a later time. In this study, we plan to compare the predictions of the AI systems with those made by breast screening radiologists in routine clinical practice and with pathologically confirmed breast cancer diagnoses.
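Score-based triage of the kind described above can be illustrated with a short sketch. It assumes each examination already has a numeric AI risk score and uses only two streams; the function name and the two-stream simplification are illustrative assumptions, and only the 60% cut-off comes from the text.

```python
def triage_by_score(scores, low_risk_fraction=0.6):
    """Split exam indices into (low_risk, radiologist_review) streams.

    The lowest-scoring `low_risk_fraction` of exams go to the low-risk
    stream; all remaining exams go to radiologist review.
    """
    # Exam indices ordered from lowest to highest risk score.
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    n_low = round(len(scores) * low_risk_fraction)
    return sorted(order[:n_low]), sorted(order[n_low:])
```

A fixed fraction is only one way to set the cut-off; a fixed score threshold chosen on a validation set would be an equally plausible design.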

We believe this work will pave the way for using AI models to aid breast cancer detection in routine clinical care in British Columbia.

Deep Learning for Differential Diagnosis of Lung Cancer

This project seeks to develop a deep learning methodology for characterization of malignant and normal lung tissues for early detection and treatment of lung cancer.

Deep neural network techniques have been successfully applied in numerous medical contexts, including diagnostics, radiology, and pathology. Radiology in particular has found many transformative uses for deep learning, specifically in classification tasks. Deep neural networks are a type of machine learning model that employs multiple processing layers to automatically detect, identify, or classify features of interest present within a dataset. The potential of these models has been greatly expanded in recent years with the application of transfer learning. Transfer learning is a technique that uses a previously trained deep learning model to improve another model being trained on a related task. Training is first performed on an initial dataset, allowing the network to adjust its internal parameters, and then continues on a related target dataset. This strategy is useful when the target training data are limited by rarity, inaccessibility, or expense of data collection. Deep learning models trained with transfer learning are less prone to over-fitting and can perform to higher standards than models trained on the target dataset alone.

In this project, convolutional neural networks (CNNs) will be trained on a dataset of pathology images, as CNNs are proficient at image classification tasks. The initial training dataset will be composed of pathology images of lung tissues that have been ground truth annotated by a pathologist. To train the CNN, pathology images will be used as inputs, and the CNN outputs will be compared to the ground truth annotations, allowing the network to adjust its parameters accordingly.

A set of pathology images from the same cohort will be excluded from the training data and used to test the accuracy of lung tissue classification performed by the newly trained neural network. Once the network performs to a pre-determined standard, transfer learning will be applied. The CNN will then be re-trained with micro-CT images of lung tissues. Micro-CT images that have been ground truth annotated will provide the network inputs and target outputs for transfer learning. A set of micro-CT images from the same cohort will be excluded from the training data and used to test the accuracy of lung tissue classification. In this way, an automated deep learning methodology could be developed to serve as an independent validation step for pathologists.
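The hold-out evaluation and the "pre-determined standard" gate described above can be sketched without any deep learning library. The function names, the 20% hold-out fraction and the fixed seed are assumptions made for illustration; the text does not state the split or the required accuracy.

```python
import random


def split_holdout(items, holdout_fraction=0.2, seed=0):
    """Shuffle a copy of `items` and split it into (train, held_out)."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_holdout = round(len(shuffled) * holdout_fraction)
    return shuffled[n_holdout:], shuffled[:n_holdout]


def accuracy(predictions, labels):
    """Fraction of predictions that match the ground truth labels."""
    if len(predictions) != len(labels) or not labels:
        raise ValueError("need equal-length, non-empty predictions and labels")
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def ready_for_transfer(predictions, labels, required_accuracy):
    """True once held-out accuracy reaches the pre-determined standard."""
    return accuracy(predictions, labels) >= required_accuracy
```

In practice the split is usually made per patient rather than per image, so that images from one patient never appear on both sides of the split.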

Results from this project could aid early diagnosis and minimize the number of patients who are unnecessarily exposed to invasive follow-up procedures and the psychological stresses associated with false-positive results. Such a methodology would be time- and cost-efficient for the health care system by serving as an independent validation step for pathologists and reducing the number of unnecessary follow-up procedures.

Evaluating Cardiac Risk in Breast Cancer Patients using Artificial Intelligence

The purpose of this project is to use deep learning and natural language processing methodologies to create an automated cardiac risk assessment tool for use in evaluating cardiac risk in breast cancer patients.

There is a known link between cardiac conditions and breast cancer. Women with breast cancer, for instance, may have a higher risk of later developing cardiovascular conditions than those without breast cancer. Further, women diagnosed with breast cancer who have a prior history of cardiovascular disease may be at increased risk of mortality from cardiac causes. Initial findings (not yet published) from BC Cancer show similar results. This increase in cardiac-related mortality may be caused by radiation-induced cardiac toxicity, with increases in heart disease proportionate to the radiation dose to the heart, potentially lasting 20 years or more.

Breast cancer patients undergoing radiation therapy have a Computed Tomography (CT) scan of the chest for radiation dose calculation. These CT scans offer an opportunity to estimate the coronary artery calcium (CAC) score, an independent predictor of coronary events that improves cardiovascular risk prediction in asymptomatic individuals. If a patient is found to have a significantly high CAC score, they may be referred for formal cardiovascular risk evaluation. However, manually performing calcium scoring on all chest CT scans of breast cancer patients is impractical because the process is tedious and time-consuming. Recent developments in artificial intelligence methodologies, such as deep learning and natural language processing (NLP), provide an opportunity to automate cardiac risk assessments for breast cancer patients.
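To make the calcium scoring step concrete, the sketch below computes a score in the style of the widely used Agatston method, which sums lesion area multiplied by a density factor based on peak attenuation. The text does not say which scoring method the project uses, so the choice of Agatston scoring, the function names and the 1 mm² minimum lesion area are assumptions. The method was also defined for dedicated cardiac CT, so applying it to radiotherapy planning CT is a further assumption.

```python
def density_weight(peak_hu):
    """Agatston density factor from a lesion's peak attenuation (HU)."""
    if peak_hu < 130:
        return 0  # below the calcium threshold, not scored
    if peak_hu < 200:
        return 1
    if peak_hu < 300:
        return 2
    if peak_hu < 400:
        return 3
    return 4


def agatston_score(lesions, min_area_mm2=1.0):
    """Sum area x density factor over all lesions.

    `lesions` is a list of (area_mm2, peak_hu) pairs, one per calcified
    lesion per slice. Lesions smaller than `min_area_mm2` are skipped.
    """
    return sum(
        area * density_weight(peak_hu)
        for area, peak_hu in lesions
        if area >= min_area_mm2
    )
```

The tedious part of manual scoring is producing the lesion list itself, which is where automatic segmentation would come in; the arithmetic above is the easy final step.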

Deep neural networks are a type of representation learning model that employs multiple processing layers to automatically detect, identify, or classify features of interest present within a dataset. In recent years, deep neural network techniques have been successfully applied in numerous medical contexts, including diagnostics, radiology, and pathology. Radiology in particular has found many transformative uses for deep learning across various modalities, not only in classification tasks but also increasingly in segmentation tasks. Automatic semantic segmentation allows specific objects, such as anatomic structures, tissues, or organs, to be identified, contoured, and labeled, as in the automatic scoring of cardiac calcification on CT scans.

NLP uses computational techniques, including machine learning, to process unstructured free-text data into structured data. Its applications are cross-disciplinary and include language translation, social media mining, and web mining. Many earlier applications of NLP were in consumer contexts (e.g. Apple’s Siri, analysing web-based customer reviews). However, in recent years, there has been increased interest in using NLP across medical contexts, in particular for automated mining of electronic health records (EHRs), which are laborious and time-consuming to analyse by hand. In the radiation oncology context, NLP can identify cancer cases, attributes, and outcomes, with real-world applications in surveillance and epidemiology.

We believe this work will pave the way for using artificial intelligence models to aid cardiac risk prediction in breast cancer patients during routine clinical care. Future applications of this work could help prevent cardiac-related deaths in breast cancer patients and survivors.

Development and Validation of Image-based Breast Cancer Risk Prediction Model

The primary objective of this project is to evaluate the performance of the image-based Artificial Intelligence (AI) algorithm for breast cancer risk prediction. While cancer risk models have been developed since 1989, they have generally relied on classical statistical models and leveraged risk factors like genetics, family history, hormonal information and mammographic breast density. While they are well calibrated at the population level, they are not accurate at the individual level.

Image-based AI models may identify subtle changes in a mammogram that indicate possible development of a sub-clinical cancer, which could explain their enhanced performance over traditional risk models. For example, screening mammography has a significant false-positive rate. Such results can be very stressful to patients and have been found to reduce a woman’s likelihood of attending subsequent screens. However, false-positive mammograms have been found to significantly increase the relative risk of detecting cancer at subsequent screening in organized screening programs in three different countries (Denmark, Spain, and the United Kingdom). Most importantly, these relative risks are comparable to those attributed to family history, one of the strongest risk factors for breast cancer.

Here in British Columbia, researchers have found that an abnormal mammogram was associated with a 1.73-fold increase in the risk of a future cancer diagnosis. The increased breast cancer risk in women with false-positive tests may be attributable to malignancies that were already present at the time of screening being misclassified when the mammogram was read by an expert screening radiologist.

Of particular interest in the Canadian context is breast screening of First Nations women. Recent studies have found that while First Nations women, on average, have the lowest breast density compared to other ethnic groups, they have an increased incidence of breast cancer. This presents an anomaly, as previous studies have found that women with higher breast density may be at an increased risk of developing breast cancer compared with those with the lowest breast density.

Results from this project have the potential to personalize the frequency of a woman’s screening based on her individual risk profile and potentially reduce downstream false-positive recalls. We believe the use of this type of image-based risk model will help eliminate potential bias against First Nations women and women of other ethnic groups, and improve accuracy in predicting breast cancer risk. The results of this study have the potential to inform practice changes for breast screening programs across Canada.

Investigation into Federated Approaches to Machine Learning

A federated approach to machine learning enables a more decentralized workflow, such that various partners can more easily and safely participate in deep learning research without exchanging data. The work in this project will pave the way for using artificial intelligence models to aid routine screening procedures.

We are currently investigating this approach on screening mammogram images, with a cohort of 250,000 patients and roughly 500,000 studies. The work will use AWS cloud services in the Canadian region. The first step will be to validate an existing algorithm on a subset of the cohort. We will then retrain the algorithm and validate it again to quantify any improvement in performance, and finally update the model together with collaborators using their locally trained model weights.
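One common way to combine locally trained weights from several sites is federated averaging, in which each site's weights are averaged in proportion to its local sample count. The text does not name the aggregation rule used in this project, so the sketch below is an assumption, with weights represented as flat lists of floats for simplicity.

```python
def federated_average(site_weights, site_sizes):
    """Average per-site weight vectors, weighted by local sample count.

    `site_weights` holds one flat list of floats per site (all the same
    length); `site_sizes` holds the number of training samples per site.
    """
    if not site_weights or len(site_weights) != len(site_sizes):
        raise ValueError("need one sample count per site")
    total = sum(site_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    n_params = len(site_weights[0])
    return [
        sum(w[i] * n for w, n in zip(site_weights, site_sizes)) / total
        for i in range(n_params)
    ]
```

Only the weight vectors and sample counts cross site boundaries here, which is what lets partners take part without exchanging images.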

The goal of this project is to re-validate the algorithm with this federated model and evaluate whether a federated learning approach improves or degrades local performance.

Siamese Neural Network for Early Detection of Breast Cancer Using Longitudinal Screening Mammograms

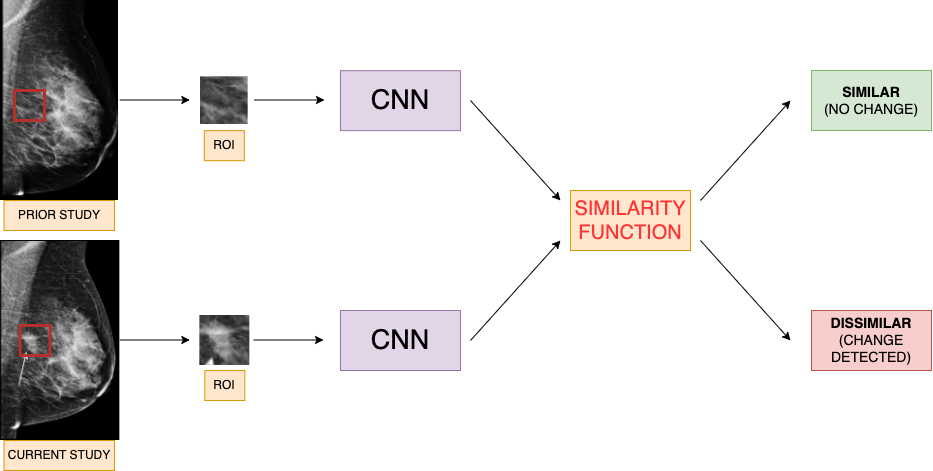

Some of the most successful AI systems to date are convolutional neural networks (CNNs), which can be used to classify a screening mammogram as negative or positive (suspicious for malignancy) based on a single image study. However, the breast radiologist’s workflow incorporates comparison of the current screening mammogram with the patient’s prior image studies before coming to a diagnostic decision. This comparison of current and prior studies contributes to radiologists’ high diagnostic accuracy. Although this method is integral to the radiologist’s clinical workflow, it has yet to be broadly incorporated in AI solutions.

This project proposes to build a Siamese neural network (twin CNN) to detect early changes in screening mammograms by comparing serial studies. A Siamese neural network uses two CNNs learning in conjunction with shared weights, and the output determines whether two given images are similar or dissimilar to each other. Clinically, this model better aligns with a typical breast radiologist’s decision workflow. The resulting model will flag mammograms that demonstrate early imaging changes for further diagnostic workup.
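The shared-weight idea can be shown with a deliberately tiny sketch in which a linear embedding stands in for each CNN branch. The function names, the linear embedding, the Euclidean distance and the threshold are all illustrative assumptions, not the project's architecture.

```python
import math


def embed(image, weights):
    """Toy embedding: one weighted sum of pixels per weight row.

    Stands in for a CNN branch; `image` is a flat list of pixel values.
    """
    return [sum(w * p for w, p in zip(row, image)) for row in weights]


def siamese_distance(image_a, image_b, weights):
    """Embed both images with the SAME weights and compare embeddings."""
    return math.dist(embed(image_a, weights), embed(image_b, weights))


def is_changed(prior, current, weights, threshold):
    """Flag the pair as dissimilar when the distance exceeds the threshold."""
    return siamese_distance(prior, current, weights) > threshold
```

Because both inputs pass through the same `weights`, identical images always map to distance zero, and training only has to learn which differences should move a pair apart.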

Mammography images adapted from: https://healthimaging.com/topics/medical-imaging/womens-imaging/breast-imaging/photo-gallery-what-does-breast-cancer-look

A prior study built a Siamese neural network that compared whole-image mammograms from a patient’s current and prior studies. The disadvantage of using whole images to train a model is that the network trains on medical images that have been resized to much smaller dimensions, losing valuable pixel information in the process. This project will instead use annotated regions of interest (ROIs) on positive mammograms and match them with the same ROI on negative studies. There will be no need for resizing, so all the pixel information contained in the ROI will be retained. We expect this to produce a more accurate model trained on useful image features.
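Cropping the same ROI from two studies at native resolution can be sketched as below. Images are plain 2D lists of pixel values, the ROI is a hypothetical `(top, left, height, width)` box, and the sketch assumes the two images are already aligned so that the same box covers the same tissue, which is a non-trivial assumption for real mammograms.

```python
def crop_roi(image, top, left, height, width):
    """Return the ROI as a new 2D list at native resolution (no resizing)."""
    if (
        top < 0
        or left < 0
        or top + height > len(image)
        or left + width > len(image[0])
    ):
        raise ValueError("ROI falls outside the image")
    return [row[left:left + width] for row in image[top:top + height]]


def paired_rois(current, prior, roi):
    """Crop the same (top, left, height, width) box from both images."""
    return crop_roi(current, *roi), crop_roi(prior, *roi)
```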